The digital landscape is shifting beneath our feet. We are no longer building simple applications where a button click reliably triggers a predictable outcome. We are entering an era of probabilistic systems–artificial intelligence models that don’t just execute code; they generate, reason, and adapt. For engineers and product managers accustomed to the binary world of traditional software development, this shift feels like stepping into a fog. You know the engine is running, but you can’t see the road ahead.

In this new paradigm, the concept of “monitoring” changes entirely. It is no longer enough to count error codes or track server uptime. When an AI system is deployed, it becomes a living entity that interacts with a chaotic, unpredictable world. It can hallucinate, it can drift, and it can behave differently depending on the input it receives.

The challenge for technical teams today is determining what to look at. Do you log everything? Do you alert on every anomaly? Or is there wisdom in looking away? The answer lies in distinguishing between the signal and the noise. This guide explores the architecture of AI observability, breaking down exactly what to log, how to define alerts, and the surprising benefits of ignoring the rest.

The Unfiltered Feed: Why You Need to Capture Every Interaction

Imagine trying to debug a complex conversation by only looking at the final sentence. You would have no idea how the logic arrived there. In the world of AI, the journey is just as important as the destination. To build a system that can stand up to production demands, you must establish a rigorous logging strategy that captures the “Unfiltered Feed” of interactions.

Traditional logging focuses on system health–CPU usage, memory leaks, database connection pools. AI monitoring, however, must focus on model health. This means capturing the inputs and the outputs in their rawest forms. When a user interacts with an AI-powered feature, you need to record the prompt, the context window, the parameters used (temperature, top-p, etc.), and the full response generated by the model.
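As a rough illustration, here is one way to capture those fields as a single structured record per call, written as JSON Lines. The `InteractionRecord` class, its field names, and the file-based `log_interaction` sink are hypothetical choices for this sketch. They are not a standard schema or any vendor’s API.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field
from typing import Any


@dataclass
class InteractionRecord:
    """One model call, captured raw. Field names are illustrative, not a standard."""
    prompt: str
    context: list[str]        # documents or prior turns placed in the context window
    params: dict[str, Any]    # sampling parameters, e.g. temperature, top_p
    response: str             # the full, unedited model output
    model: str
    latency_ms: float
    request_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: float = field(default_factory=time.time)
    metadata: dict[str, Any] = field(default_factory=dict)  # user, device, region, ...

    def to_json(self) -> str:
        # One JSON object per line (JSON Lines) keeps records easy to grep and replay.
        return json.dumps(asdict(self), ensure_ascii=False)


def log_interaction(record: InteractionRecord, path: str) -> None:
    """Append one record to a local file; a stand-in for a real log pipeline."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(record.to_json() + "\n")
```

In production you would probably send these records to your existing log pipeline rather than a local file. The point is that every call produces one self-describing record you can replay later.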

Why is this necessary? Because AI models are probabilistic. A model might produce a correct answer 99% of the time, but that 1% failure rate can be catastrophic depending on the use case. Without raw logs, you cannot investigate why the model failed. Was it a hallucination? Was the context window too small? Was the user input ambiguous?

Furthermore, capturing this data creates the foundation for continuous improvement. As models are retrained or fine-tuned, you need a benchmark. This is often referred to as a “golden dataset”–a collection of past interactions that serve as a reference point for quality. Without these logs, you are flying blind, unable to measure the impact of your updates or the degradation of your model over time.
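A golden dataset only helps if you actually replay it. The sketch below assumes a hypothetical `generate` function that calls the model under test and a hypothetical `is_acceptable` judge. It computes a pass rate and flags a regression when a candidate model scores noticeably worse than the current one. The substring check in the test is a toy; free-form answers generally need a more forgiving comparison, such as a rubric or a human reviewer.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GoldenExample:
    prompt: str
    reference: str  # an answer a reviewer accepted as correct


def pass_rate(
    examples: list[GoldenExample],
    generate: Callable[[str], str],
    is_acceptable: Callable[[str, str], bool],
) -> float:
    """Fraction of golden examples where the model output is judged acceptable."""
    if not examples:
        raise ValueError("golden dataset is empty")
    passed = sum(is_acceptable(generate(ex.prompt), ex.reference) for ex in examples)
    return passed / len(examples)


def check_regression(baseline: float, candidate: float, tolerance: float = 0.02) -> bool:
    """True if the candidate's pass rate fell more than `tolerance` below the baseline."""
    return candidate < baseline - tolerance
```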

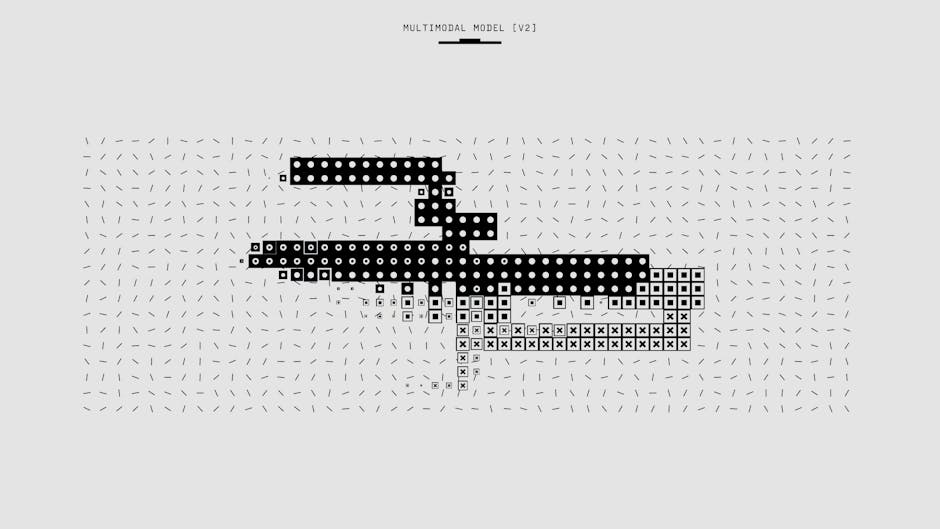

Photo by Google DeepMind on Pexels

Beyond the raw data, you must log the metadata. Who sent the request? From which device? What was the latency? This context is crucial for identifying patterns. For instance, you might notice that the model performs poorly specifically on requests coming from mobile devices or specific geographic regions. This granular visibility allows for targeted fixes rather than broad, sweeping changes.
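Once metadata travels with each record, finding a weak segment can be as simple as a group-by. This sketch assumes each logged record is a dict with a `metadata` dict and a boolean `failed` flag; both are illustrative field names, and how you decide that an interaction failed (user feedback, an automated check) is up to you.

```python
from collections import defaultdict
from typing import Any


def failure_rate_by(records: list[dict[str, Any]], key: str) -> dict[str, float]:
    """Group logged interactions by one metadata field and compute each group's failure rate."""
    totals: dict[str, int] = defaultdict(int)
    failures: dict[str, int] = defaultdict(int)
    for rec in records:
        # Records missing the field land in an "unknown" bucket instead of being dropped.
        group = str(rec.get("metadata", {}).get(key, "unknown"))
        totals[group] += 1
        failures[group] += int(rec["failed"])
    return {g: failures[g] / totals[g] for g in totals}
```

Small segments produce noisy rates, so in practice you would likely ignore groups with only a handful of requests.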

Don’t Scream at the Dashboard: The Art of Meaningful Alerting

Once you have a firehose of data pouring in, the natural instinct is to react to everything. If a light turns yellow, you slam on the brakes. But in AI systems, reacting to every fluctuation causes “alert fatigue,” where critical warnings are ignored simply because the noise is too loud.

The key to effective alerting is understanding that AI models are not static. They are dynamic systems that exist in a state of constant, subtle flux. Therefore, you cannot alert on absolute numbers; you must alert on deviations from established baselines.
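One simple way to alert on deviations instead of absolute numbers is to compare each new value against a rolling window of recent values. The `BaselineAlert` class below is a hypothetical sketch using a z-score (distance from the rolling mean, measured in standard deviations). The window size, threshold, and warm-up count are illustrative, not tuned recommendations.

```python
import statistics
from collections import deque


class BaselineAlert:
    """Flag values that deviate from a rolling baseline rather than crossing a fixed number."""

    def __init__(self, window: int = 100, z_threshold: float = 3.0, min_samples: int = 30):
        self.history = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.min_samples = max(2, min_samples)  # stdev needs at least two points

    def observe(self, value: float) -> bool:
        """Record a value; return True if it deviates from the current baseline."""
        alert = False
        if len(self.history) >= self.min_samples:
            mean = statistics.fmean(self.history)
            stdev = statistics.stdev(self.history)
            if stdev == 0:
                # A perfectly flat baseline: any change at all is a deviation.
                alert = value != mean
            else:
                alert = abs(value - mean) / stdev > self.z_threshold
        self.history.append(value)
        return alert
```

Because every value, including anomalous ones, is added to the history, the baseline slowly adapts to sustained shifts. If you would rather keep alerting until someone acknowledges the change, exclude flagged values from the window.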

The most immediate metric to monitor is latency. In many traditional applications, an extra 100 milliseconds goes unnoticed. In an AI system, especially one relying on large language models or computer vision inference, latency can make or break the user experience. Set up alerts on P95 and P99 latency, the response times that 95% and 99% of requests stay under, because an average hides the slow tail that users actually feel. If the time it takes to process requests jumps from 1.5 seconds to 2.5 seconds, that is a signal that the system is struggling, whether due to load, a model inference bottleneck, or a database slowdown.
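Computing tail latency needs nothing beyond the standard library. This sketch assumes you already collect per-request latencies in milliseconds. It uses Python’s `statistics.quantiles` to get P95 and P99 and flags a regression when the current P95 exceeds a stored baseline by a chosen ratio; the 1.5× ratio is an illustrative default, not a recommendation.

```python
import statistics


def tail_latency(samples_ms: list[float]) -> tuple[float, float]:
    """Return (P95, P99) from a window of latency samples in milliseconds."""
    # quantiles(n=100) returns the 99 cut points for percentiles 1..99.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return cuts[94], cuts[98]


def latency_regressed(current_ms: list[float], baseline_p95_ms: float, max_ratio: float = 1.5) -> bool:
    """Alert when the current P95 exceeds the baseline P95 by more than `max_ratio`."""
    p95, _ = tail_latency(current_ms)
    return p95 > baseline_p95_ms * max_ratio
```

With the numbers from the paragraph above, a P95 that moves from about 1.5 seconds to 2.5 seconds is a ratio of roughly 1.67, so this check would fire.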

However, latency is only one piece of the puzzle. You must also monitor drift: the gradual decline in a model’s performance as the world it operates in moves away from the data it learned from. This can be concept drift (the real world changes, so the relationship the model learned between inputs and correct outputs becomes outdated) or data drift (the distribution of inputs changes). For example, if you are running a sentiment analysis model on customer support tickets, the language customers use might evolve. If the model’s accuracy drops from 95% to 85% over a month, you need to know about it before it impacts customer satisfaction.
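Data drift can be watched even before labels arrive, by comparing the distribution of some input feature today against a reference window. The sketch below implements the Population Stability Index (PSI) with equal-width bins. Monitoring something like ticket length as the feature is an assumption for illustration, and quantile-based bins are an equally common choice. A commonly quoted rule of thumb treats a PSI above about 0.25 as a significant shift. Note that measuring an accuracy drop like the one described above still requires labeled outcomes; PSI only tells you the inputs have moved.

```python
import math


def population_stability_index(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Compare a reference sample and a current sample of one numeric feature.

    Bin edges come from the reference sample's range; out-of-range values are
    clamped into the edge bins, and a small epsilon avoids log(0) for empty bins.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        eps = 1e-6
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```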

Finally, you must implement safety alerts. If your AI is designed to filter toxic content or prevent bias, you need a hard stop. If the filter fails to catch a violation, that is an immediate alert, regardless of the system’s overall health. These alerts should be “pager-friendly”–instant notifications that demand immediate human intervention.
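The difference between baseline-driven alerts and hard-stop safety alerts can be encoded directly in your routing logic. In this hypothetical sketch, a safety violation always pages, while other events are paged, ticketed, or ignored depending on how far they deviate from their baseline. The event names, the `deviation` field, and both thresholds are illustrative. Detecting that a violation slipped through (for example with a second classifier or human review) is outside the sketch’s scope.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    PAGE = "page"      # demands immediate human intervention
    TICKET = "ticket"  # review during working hours
    IGNORE = "ignore"  # within normal variance


@dataclass
class Event:
    kind: str               # e.g. "safety_violation", "latency", "drift" (illustrative names)
    deviation: float = 0.0  # distance from baseline, in standard deviations


def route(event: Event, page_z: float = 4.0, ticket_z: float = 2.0) -> Severity:
    # Safety failures bypass baselines entirely: a single missed violation pages.
    if event.kind == "safety_violation":
        return Severity.PAGE
    z = abs(event.deviation)
    if z >= page_z:
        return Severity.PAGE
    if z >= ticket_z:
        return Severity.TICKET
    return Severity.IGNORE
```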

Photo by Griffin Wooldridge on Pexels

The Art of Selective Blindness: What to Stop Worrying About

Here is a counter-intuitive truth of AI engineering: sometimes, the best way to monitor your system is to stop monitoring it so closely. The “Signal in the Noise” philosophy suggests that you cannot and should not try to capture every single variable. If you do, you will be paralyzed by irrelevant data.

One of the biggest mistakes organizations make is obsessing over minor variations in output. AI models are stochastic: when sampling at a nonzero temperature, they don’t produce the exact same token sequence every time they are asked the same question. If you are running a creative writing tool, the model might generate a slightly different story opening on Tuesday than it did on Monday. While this might look like a failure, it is actually a feature: it ensures variety and avoids “robotic” repetition.

Therefore, you must define what constitutes an “acceptable variance.” If the output quality remains within your pre-defined quality gates, you should not generate an alert. Do not treat the model as a deterministic function; treat it as a probabilistic engine. Ignoring the minor, non-critical fluctuations allows your team to focus their energy on the actual problems–spikes in latency, catastrophic failures, and significant drops in accuracy.
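To make “acceptable variance” concrete, judge each output against a quality check and alert on the pass rate, never on whether today’s text matches yesterday’s. In the sketch below, `score` is a hypothetical stand-in for whatever evaluator you trust (a rubric, a classifier, a human rating); the pass mark, gate, and window size are illustrative assumptions.

```python
from collections import deque
from typing import Callable


class QualityGate:
    """Alert on the share of outputs that fail a quality check, not on output differences."""

    def __init__(self, score: Callable[[str], float], pass_mark: float = 0.7,
                 min_pass_rate: float = 0.9, window: int = 200):
        self.score = score
        self.pass_mark = pass_mark
        self.min_pass_rate = min_pass_rate
        self.results = deque(maxlen=window)  # True = output cleared the pass mark

    def observe(self, output: str) -> bool:
        """Score one output; return True only if the rolling pass rate falls below the gate."""
        self.results.append(self.score(output) >= self.pass_mark)
        if len(self.results) < self.results.maxlen:
            return False  # not enough evidence yet
        return sum(self.results) / len(self.results) < self.min_pass_rate
```

Two different story openings that both clear the pass mark count the same, so ordinary variety never trips the gate.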

Another area to ignore is the “chatter” of the system. In a distributed architecture, logs are generated at every layer: the load balancer, the API gateway, the model server, and the database. This infrastructure chatter is separate from the interaction records described earlier, which you keep in full. You need to know that a request failed, but you don’t always need the stack trace of every single API call in real time. Log aggregation and sampling can cut this noise. For each interaction, focus on why it succeeded or failed rather than on how every component handled it.
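Sampling works best when it is deterministic. This sketch keeps every error and a fixed fraction of successful requests, deciding by hashing a request ID so that every layer that sees the same ID makes the same keep-or-drop decision and sampled traces stay complete end to end. The request ID format and the 5% default rate are assumptions for illustration.

```python
import hashlib


def should_keep(request_id: str, is_error: bool, sample_rate: float = 0.05) -> bool:
    """Keep every error; keep a deterministic fraction of successful requests."""
    if is_error:
        return True
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    # 48 bits of the hash mapped to a float in [0, 1); same ID -> same bucket on every layer.
    bucket = int.from_bytes(digest[:6], "big") / 2**48
    return bucket < sample_rate
```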

By practicing selective blindness, you protect your team’s mental bandwidth. You create a culture where alerts are trusted. When a real issue arises, your team will know to pay attention because they have trained themselves to filter out the background radiation of normal AI behavior.

Photo by Tima Miroshnichenko on Pexels

Ready to Build a Safer System?

Navigating the world of AI monitoring is not about collecting more data; it is about collecting the right data. It is a delicate balance between the vigilance required to catch system failures and the wisdom to know when the system is simply doing its job.

By capturing the full interaction feed, you ensure that you have the forensic data needed to debug and improve. By defining clear, meaningful alerts for latency and drift, you protect the user experience and maintain trust. And by practicing selective blindness, you avoid the paralysis of alert fatigue.

The goal is not to control the AI completely, but to understand it. When you understand how your model behaves in the wild, you can deploy with confidence. You can iterate with purpose. And you can build systems that don’t just perform, but evolve.

The journey to AI observability is ongoing. Start small. Log your inputs and outputs. Set your latency thresholds. Define your noise floor. And as you grow more comfortable, you will find that you are no longer just watching the system–you are truly understanding it.
