In the world of modern software architecture, few concepts are as celebrated and as misunderstood as the webhook. Developers love them because they solve the problem of “polling”, that is, constantly checking a server to see if something has changed. With a webhook, the server comes to you. It pushes data the moment it happens.

But there is a dark side to this elegance. In a traditional REST API call, the client that wants the data sends the request and waits for the response. A webhook is usually sent in the background, with nobody waiting on the result. In practice it becomes a “fire and forget” mechanism: you send the data, and you hope the other side receives it, processes it, and tells you it did.

If you treat webhooks like email, you will have a bad time. In this deep dive, we will explore why most webhook systems fail silently, and how to build a robust infrastructure that guarantees delivery without becoming a burden on your own servers.

Why Most Developers Treat Webhooks Like Email

The primary reason webhook systems fail is a mental model mismatch. When we build a system to send a webhook, we often treat it like sending an email. We assume that as long as the network is up and the server is running, the data will arrive.

However, email has a built-in retry mechanism and a status code system. A webhook is just a single HTTP POST request. If the receiver is down, the connection times out, or the receiving server is overloaded and rejects the request with a 503 Service Unavailable, a sender that never checks the outcome has no way of knowing. The request is lost in the ether.

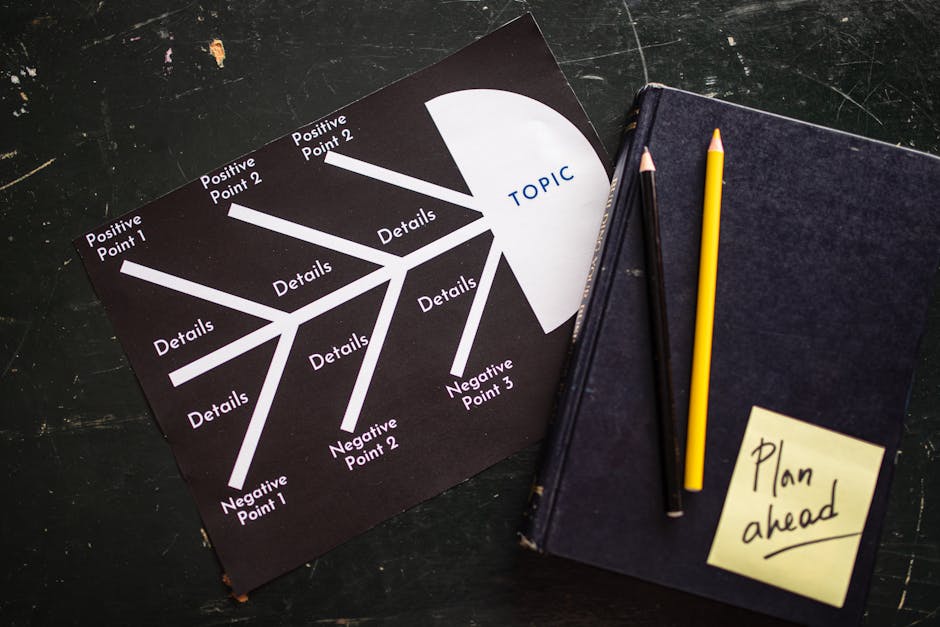

Photo by RDNE Stock project on Pexels


This lack of feedback is the root cause of most data loss in distributed systems. In a synchronous request-response model (like a REST API), the client gets an immediate 200 OK or 404 Not Found. In an asynchronous model, the client often moves on, assuming the job is done. If the receiver doesn’t reply, or replies with an error that the sender can’t parse, the data is gone forever.

To fix this, we must move away from the “fire and forget” mentality and adopt a strategy of delivery guarantees. This means the sender must be proactive, not reactive.
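As a minimal sketch of what “proactive” can mean, the sender can map the result of every delivery attempt to an explicit next action instead of discarding it. The names `Outcome` and `classify_delivery` are hypothetical, and the policy shown (retry on no response, 408, 429, and 5xx; give up on everything else that is not 2xx) is an assumption for illustration, not a standard.

```python
from enum import Enum
from typing import Optional


class Outcome(Enum):
    DELIVERED = "delivered"  # receiver acknowledged with a 2xx
    RETRY = "retry"          # likely transient; try again later
    FAILED = "failed"        # likely permanent; retrying will not help


def classify_delivery(status: Optional[int]) -> Outcome:
    """Map the result of one delivery attempt to a next action.

    `status` is the HTTP status code of the response, or None when no
    response arrived at all (timeout, connection refused, DNS failure).
    """
    if status is None:
        return Outcome.RETRY
    if 200 <= status < 300:
        return Outcome.DELIVERED
    # Assumed policy: timeouts, rate limits, and server errors are retryable.
    if status in (408, 429) or status >= 500:
        return Outcome.RETRY
    return Outcome.FAILED
```

The point of the sketch is that a 503 or a timeout is recorded and acted on rather than silently ignored.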

The Art of the Gentle Nudge: Retry Patterns

If you cannot rely on the network to be perfect, you must rely on your own resilience. The solution is a retry mechanism, but not just any retry mechanism. A naive retry (sending the request again immediately) often makes the problem worse.

Imagine a scenario where the receiver’s server is down. If you retry immediately, you will hit an error every time. This consumes bandwidth, burns through API quotas, and adds load to an already failing system. When many senders do this at once, their retries collide in what is known as a “thundering herd”.

The industry standard for this is Exponential Backoff with Jitter.

How It Works

- Exponential Backoff: After the first failed attempt, you wait for a set amount of time (e.g., 1 second) before retrying. If that fails, you wait twice as long (2 seconds). Then 4 seconds, then 8 seconds. This gives the receiver time to recover and prevents a stampede of requests.

- Jitter: To prevent synchronized retries (where every system in a cluster retries at the exact same second), you add a random “jitter” to the wait time. If your backoff is 4 seconds, you might wait between 3.5 and 4.5 seconds. This randomizes the retry traffic, ensuring that not every system hits the server at the same moment.
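The two steps above can be sketched as a single delay function. This is an illustrative sketch, not a reference implementation: the function name `backoff_delay`, the 60-second `cap`, and the 12.5% `jitter` fraction are assumptions, with the jitter fraction chosen so that a 4-second backoff lands between 3.5 and 4.5 seconds as in the example.

```python
import random
from typing import Optional


def backoff_delay(
    attempt: int,
    base: float = 1.0,
    cap: float = 60.0,
    jitter: float = 0.125,
    rng: Optional[random.Random] = None,
) -> float:
    """Seconds to wait before retry number `attempt` (0-based).

    The delay doubles on each attempt (1s, 2s, 4s, 8s, ...) up to `cap`,
    then is shifted by a random amount of at most +/- `jitter` * delay.
    """
    source = rng if rng is not None else random
    delay = min(cap, base * (2 ** attempt))
    spread = delay * jitter
    return delay + source.uniform(-spread, spread)
```

A sender would sleep for `backoff_delay(attempt)` seconds between attempts. Passing a seeded `random.Random` as `rng` makes the delays reproducible in tests.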

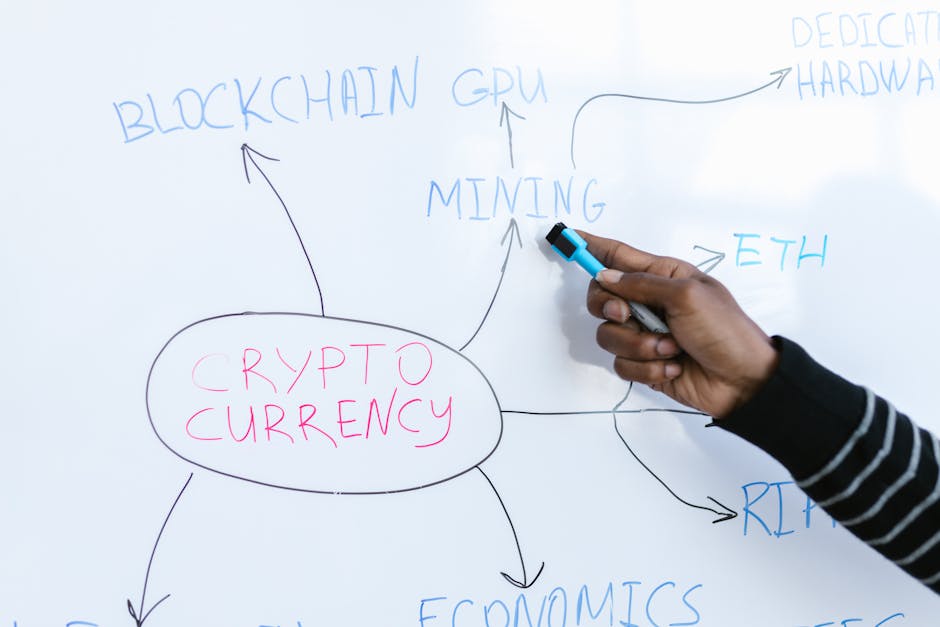

Photo by Monstera Production on Pexels


This pattern transforms a brittle system into a resilient one. It acknowledges that the network is imperfect and builds a safety buffer into the delivery logic. However, retries are only half the battle. Every retry is another chance for the receiver to process the same event twice, so without safeguards you risk flooding its database with duplicate transactions.

When Pushing Just Isn’t Enough: The Idempotency Key

Imagine a scenario: A payment gateway sends a webhook to your server to notify you of a successful transaction. Your network flaps, and your webhook provider retries the request 5 times. Your server processes the notification 5 times. The customer ordered one item, but your system has now recorded and fulfilled 5 orders.

This is a catastrophic failure. To prevent this, we must implement Idempotency.

An operation is idempotent if performing it many times has the same effect as performing it once. In the context of webhooks, this means that the receiver must be able to detect if it has already processed a specific event, regardless of how many times the sender retries it.

Implementing Idempotency on the Receiver

The most common implementation involves an Idempotency Key.

- Generation: The sender includes a unique key in a header of the webhook request (e.g., Idempotency-Key: 550e8400-e29b-41d4-a716-446655440000).

- Storage: The receiver stores this key and the state of the request in a database (Redis is a common choice for this due to speed).

- The Check: When the receiver receives a webhook, it first checks the database for the key.

  - If found: The receiver returns a 200 OK immediately, without processing the payload again.

  - If not found: The receiver processes the payload, saves the state associated with the key, and then returns the 200 OK.

This simple mechanism ensures that no matter how many times the webhook provider retries the request, your system only processes the transaction once. It turns a chaotic retry loop into a safe, idempotent operation.
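A minimal sketch of the receiver-side check might look like the following. The class name `IdempotentReceiver` is hypothetical, the in-memory dictionary stands in for Redis or a database table, and returning 400 when the header is missing is an assumption rather than part of the pattern described above.

```python
from typing import Callable, Dict, Tuple


class IdempotentReceiver:
    """Processes each webhook at most once per Idempotency-Key."""

    def __init__(self, process: Callable[[dict], None]) -> None:
        self._process = process
        # In-memory stand-in for Redis or a database table (assumption).
        self._seen: Dict[str, str] = {}

    def handle(self, headers: Dict[str, str], payload: dict) -> Tuple[int, str]:
        key = headers.get("Idempotency-Key")
        if key is None:
            # Assumed policy: reject requests that carry no key.
            return 400, "missing Idempotency-Key"
        if key in self._seen:
            # Already handled: acknowledge without processing again.
            return 200, "duplicate ignored"
        self._process(payload)
        self._seen[key] = "processed"
        return 200, "processed"


if __name__ == "__main__":
    orders = []
    receiver = IdempotentReceiver(orders.append)
    headers = {"Idempotency-Key": "550e8400-e29b-41d4-a716-446655440000"}
    for _ in range(5):  # the provider retries five times
        receiver.handle(headers, {"order_id": 1})
    print(len(orders))
```

With several receiver processes running in parallel, the check and the write would need to be a single atomic step in the shared store; this sketch does not attempt that.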

The Safety Net: Dead Letter Queues and Monitoring

Even with exponential backoff and idempotency, there are edge cases where delivery is impossible. The receiver might be offline for days, or the payload might be malformed in a way that your system cannot recover from (a “poison pill”).

When a webhook fails after a certain number of retries (e.g., 10 attempts), you have exhausted your options. At this point, you must stop retrying to save resources.

This is where the Dead Letter Queue (DLQ) comes in. The DLQ is a special storage area for failed messages that have been retried but still cannot be delivered.

Why You Need a DLQ

A DLQ is not just a trash can; it is a forensic tool. It serves two critical functions:

- Audit Trail: It preserves the exact payload that failed to process, allowing your team to debug why the system rejected it.

- Recovery: It allows for manual intervention. A human can review the failed payload in the DLQ, fix the code, and manually re-queue the message for processing.
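The retry limit and the two functions above can be sketched together. Everything here is illustrative: `Dispatcher`, `DeadLetter`, and the `send` callable (assumed to raise an exception on failure) are hypothetical names, and a plain list stands in for a real queue. The wait between attempts is omitted to keep the sketch short.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class DeadLetter:
    payload: dict      # the exact payload that could not be delivered
    attempts: int      # how many times delivery was tried
    last_error: str    # the final error, kept for debugging


@dataclass
class Dispatcher:
    send: Callable[[dict], None]  # hypothetical: raises on failure
    max_attempts: int = 10
    dead_letters: List[DeadLetter] = field(default_factory=list)

    def deliver(self, payload: dict) -> bool:
        """Try up to max_attempts times, then park the payload in the DLQ."""
        last_error = ""
        for _ in range(self.max_attempts):
            try:
                self.send(payload)
                return True
            except Exception as exc:
                last_error = repr(exc)
                # A real sender would wait with backoff before the next try.
        self.dead_letters.append(
            DeadLetter(payload, self.max_attempts, last_error)
        )
        return False

    def requeue(self, index: int) -> bool:
        """Manually retry one dead letter, e.g. after a fix is deployed."""
        letter = self.dead_letters.pop(index)
        return self.deliver(letter.payload)
```

Keeping the payload and the last error together is what makes the queue usable as an audit trail, and `requeue` is the manual recovery path.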

However, a DLQ is useless if nobody knows that messages are landing in it. This leads to the final pillar of reliable webhook systems: Observability.

You must set up alerts for every delivery that exhausts its retries. If a webhook enters the DLQ, your monitoring system should ping you immediately. Without this visibility, you are flying blind, unaware that your users are missing critical updates.


Your Next Step Toward Resilience

Building a reliable webhook system is an exercise in humility. It requires accepting that the network will fail, servers will crash, and users will make mistakes. You cannot build a perfect system, but you can build a robust one.

By implementing exponential backoff with jitter, enforcing idempotency keys, and utilizing dead letter queues for failed attempts, you transform your webhooks from fragile callbacks into a reliable data pipeline.

Don’t wait for a critical customer notification to be lost in the ether. Audit your current webhook implementation today. Are you sure the receiver is replying with the correct status codes? Do you have a mechanism to handle retries? Is your event handling idempotent?

The silence of a failed webhook is deafening. Build the noise into your system so you never have to hear it.
